A Browser-Based, Open Source Tool for Alternative Communication

March 17, 2018

Originally published on CSS Tricks.

Shay Cojocaru contributed to this post.

Have you ever lost your voice? How did you handle that? Perhaps you carried a notebook and pen to scribble notes. Or jotted quick texts on your phone.

Have you ever traveled somewhere where you didn’t speak or understand the language everyone around you was speaking? How did you order food, or buy a train ticket? Perhaps you used a translation phrasebook, or Google Translate. Perhaps you relied mostly on physical gestures.

All of these solutions are examples of communication methods — tools and strategies — that you may have used before to solve everyday communicative challenges. The preceding examples are temporary solutions to temporary challenges. Your laryngitis cleared up. You returned home, where accomplishing daily tasks in your native tongue is almost effortless. Now imagine that these situational obstacles were somehow permanent.

I grew up knowing the challenges and creativity needed for effective communication when verbal speech is impeded. My younger sister speaks one word: “mama.” When we were little, I remember our mom laying a white sheet over a chair to take pictures of everyday items — an apple, a fork, a toothbrush. She painstakingly printed, cut out, laminated, and organized these images for my sister to use to point at what she wanted to say. We carried her words in plastic baggies.

As we both grew up, and technology evolved, her communication options expanded exponentially: from laminated paper, to a proprietary touchscreen device with text-to-speech functionality, to a communication app on the iPod Touch, and later the iPad.

Different people experience difficulty verbalizing speech for a multitude of reasons. As in my sister’s case, sometimes it’s genetic. Sometimes it’s situational. The reasons may be temporary, chronic, or permanent. Augmentative and alternative communication (AAC) is an umbrella term encompassing various communication methods used to supplement or replace speech. The United States Society for Augmentative and Alternative Communication (USSAAC) defines AAC as including “all forms of communication (other than oral speech) that are used to express thoughts, needs, wants, and ideas.” The fact that you’re reading these words is an example of AAC: writing is a mechanism that augments my verbal communication.

The range of communication solutions people employ is as varied as the reasons they are needed. Examples range from printed picture grids, to text-to-speech apps, to switches that enable typing using Morse code, to software that tracks eye movement or detects facial movements. (The software behind Stephen Hawking’s AAC is open source!)

The Convention on the Rights of Persons with Disabilities (CRPD), an international human rights treaty intended to protect the rights and dignity of people with disabilities, includes accessibility to communication and information. Huge challenges exist in making this access universal. According to the World Health Organization, in many low-income and middle-income countries, only 5-15% of the people who need assistive devices and technologies are able to obtain them. Commercial AAC solutions can be prohibitively expensive, many come in a limited number of languages, and many require a specific app store or proprietary device. Together, these barriers can render them largely inaccessible to many people in low-income countries.

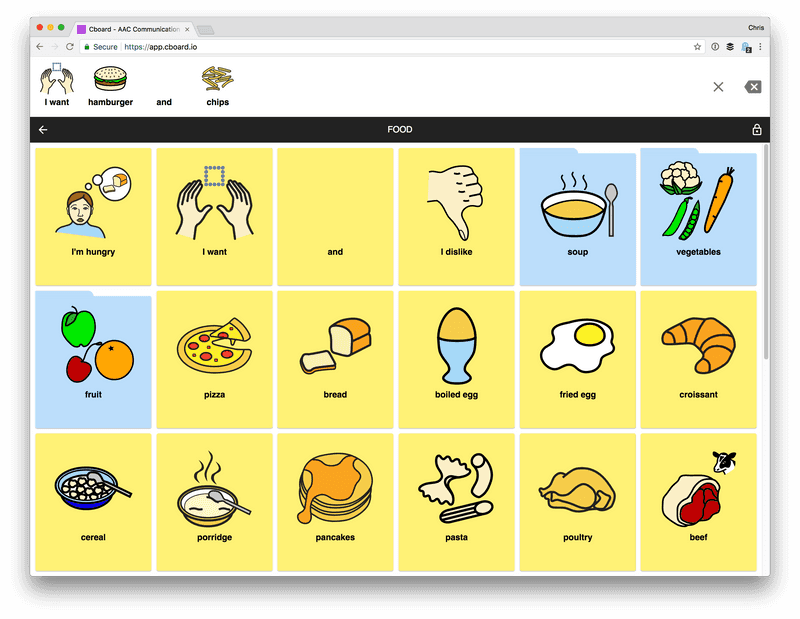

Enter Cboard, an open source project (recently supported by the UNICEF Innovation Fund!) powered by people dedicated to the idea of providing a solution that works for everyone, everywhere; a free, web-based communication board that leverages the thriving open source ecosystem and the built-in functionality of modern browsers.

It’s a complex problem, but, by taking advantage of available open source software and key ways in which the web has evolved over the last couple of years (in terms of modern browser API development and web standards), we can build a free, multilingual, open source, web-based alternative. Let’s talk about a few of those pieces — the Web Speech API, React, the Internationalization API, and the “progressive web app” concept.

Web Speech API

The challenge of artificially producing human speech is not new. Speech recognition and synthesis tools have been available for quite some time, from voice dictation software to accessibility tools like screen readers. But a browser-based API makes it possible to build web services that offer speech synthesis with a low barrier to entry and a consistent experience.

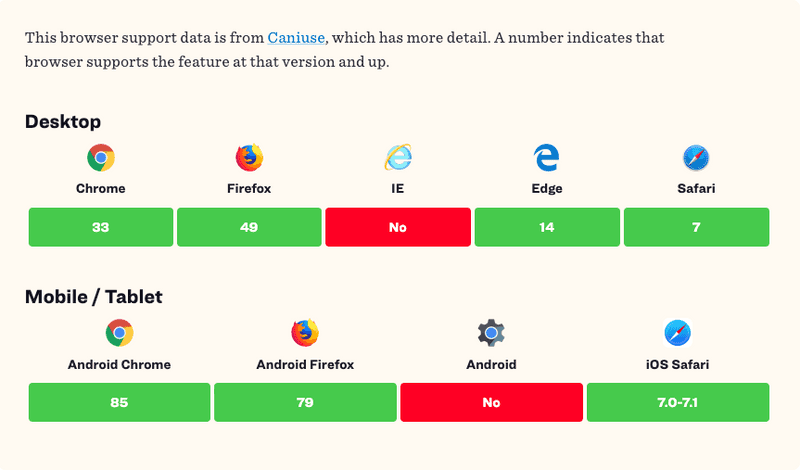

The Web Speech API provides an interface for speech recognition (speech-to-text) and speech synthesis (text-to-speech) in the browser. With Cboard, we are primarily concerned with the SpeechSynthesis interface, which is used to produce text-to-speech (TTS) output. Using the API, we can retrieve information about the synthesis voices available on the device (which vary by browser and operating system), start and pause speech, and so on. Browsers tend to use the speech services available by default on the device’s operating system, and the API exposes methods to interact with these services. We’ve done our own mapping of some of the voice and language offerings by digesting data returned from the SpeechSynthesis interface on different devices running different operating systems, using different browsers.
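As a rough illustration of the SpeechSynthesis interface described above, here is a minimal sketch of how text-to-speech output might be triggered. The helper names `pickVoice` and `speak` are assumptions made for this example and are not taken from Cboard's code; only `speechSynthesis.getVoices()`, `speechSynthesis.speak()`, and `SpeechSynthesisUtterance` come from the Web Speech API. The browser globals are looked up through `globalThis` so the snippet also loads in environments that lack the API.

```typescript
// Minimal shape of the voice objects returned by speechSynthesis.getVoices().
interface VoiceLike {
  name: string;
  lang: string; // BCP 47 language tag, e.g. "en-US"
}

// Hypothetical helper: prefer an exact language match, then fall back to
// any voice that shares the same base language ("es" for "es-MX").
function pickVoice<V extends VoiceLike>(voices: V[], lang: string): V | undefined {
  const wanted = lang.toLowerCase();
  const base = wanted.split("-")[0];
  return (
    voices.find((v) => v.lang.toLowerCase() === wanted) ??
    voices.find((v) => v.lang.toLowerCase().split("-")[0] === base)
  );
}

// Hypothetical helper: speak `text` if the browser exposes speech synthesis.
// Returns false when the API is unavailable so callers can degrade gracefully.
function speak(text: string, lang: string): boolean {
  const g = globalThis as any;
  if (!g.speechSynthesis || !g.SpeechSynthesisUtterance) return false;

  const utterance = new g.SpeechSynthesisUtterance(text);
  utterance.lang = lang;
  const voice = pickVoice(g.speechSynthesis.getVoices() as VoiceLike[], lang);
  if (voice) utterance.voice = voice;
  g.speechSynthesis.speak(utterance);
  return true;
}
```

In a browser, `speak("I want an apple", "en-US")` would queue the phrase for output. Some browsers populate the voice list asynchronously, so `getVoices()` can return an empty array until the `voiceschanged` event has fired; in that case the sketch simply leaves the voice choice to the browser.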

You can see, for example, Chrome on macOS shows 66 voices. That’s because it uses macOS native voices, as well as 19 additional voices provided by the browser. (Interested to see what voices and languages are available to you? Check out browserspeechsupport.me.)
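A count like the one above could be produced with a small summary over the voice list. This is a sketch under assumptions: `summarizeVoices` is a hypothetical helper, the sample data is made up, and it assumes the browser marks its own bundled voices with `localService: false` (the `localService` flag itself is part of `SpeechSynthesisVoice`).

```typescript
// The subset of SpeechSynthesisVoice fields this sketch relies on.
interface VoiceInfo {
  name: string;
  lang: string;
  localService: boolean; // true when a local (on-device) synthesizer provides the voice
}

interface VoiceSummary {
  total: number;
  local: number;
  remote: number;
  languages: string[]; // distinct language tags, sorted
}

// Hypothetical helper: count voices by origin and list the distinct languages.
function summarizeVoices(voices: VoiceInfo[]): VoiceSummary {
  const local = voices.filter((v) => v.localService).length;
  const languages = Array.from(new Set(voices.map((v) => v.lang))).sort();
  return { total: voices.length, local, remote: voices.length - local, languages };
}

// Made-up sample data; in a browser you would pass speechSynthesis.getVoices().
const sampleVoices: VoiceInfo[] = [
  { name: "Sample OS voice (English)", lang: "en-US", localService: true },
  { name: "Sample OS voice (Spanish)", lang: "es-ES", localService: true },
  { name: "Sample browser voice (English)", lang: "en-US", localService: false },
];

const summary = summarizeVoices(sampleVoices);
console.log(summary.total, summary.local, summary.remote, summary.languages);
```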

Comprehensive support for the Web Speech API is still getting there, but most major modern browsers support it. (The Speech Synthesis API is available to 78.81% of users globally at time of writing). The draft specification was introduced in 2012, and is not yet a standard.

React

React is a JavaScript library for building user interfaces. One of the most unambiguous insights from the 2017 “State of JavaScript” survey of over 23,000 developers was that React is currently the “dominant front-end library” in terms of sheer numbers, with high marks for usage level and developer satisfaction.

That doesn’t mean it’s the best for every situation, and it doesn’t mean it will be dominant in the long-term. But its features, and the relative ubiquity of adoption (at this point, at least), make it a great option for our project, because there is a lower barrier to entry for people to begin contributing — there is a strong community for learning and troubleshooting.

React makes use of the concept of the “virtual” DOM, where a virtual representation of the UI is kept in memory. When the state of your application changes, React builds a new virtual representation and compares it against the previous one using a “diffing” algorithm, then applies only the resulting differences to the “real” DOM. This allows us to make efficient changes to the view layer of an application, and represent the state of our application in a predictable way, without requiring manual DOM manipulation (for the most part). React also emphasizes the use of component-based architecture.
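To make the diffing idea concrete, here is a deliberately simplified toy sketch: it compares two in-memory trees and returns the list of changes a renderer would need to apply. It is not React's actual reconciliation algorithm (it ignores keys, components, and event handlers), and the `VNode`, `Patch`, and `diff` names are assumptions made for this example.

```typescript
// A toy virtual node: a tag, string props, and child nodes or text.
type VNode = { tag: string; props: Record<string, string>; children: (VNode | string)[] };

// A change to apply at a position in the tree, addressed by child indexes.
type Patch =
  | { type: "replace"; path: number[]; node: VNode | string }
  | { type: "setProp"; path: number[]; name: string; value: string }
  | { type: "removeProp"; path: number[]; name: string }
  | { type: "insert"; path: number[]; node: VNode | string }
  | { type: "remove"; path: number[] };

function diff(prev: VNode | string, next: VNode | string, path: number[] = []): Patch[] {
  // Text nodes, or a text/element mismatch: replace unless identical.
  if (typeof prev === "string" || typeof next === "string") {
    return prev === next ? [] : [{ type: "replace", path, node: next }];
  }
  // A different element type replaces the whole subtree.
  if (prev.tag !== next.tag) return [{ type: "replace", path, node: next }];

  const patches: Patch[] = [];
  for (const name of Object.keys(next.props)) {
    const value = next.props[name];
    if (prev.props[name] !== value) patches.push({ type: "setProp", path, name, value });
  }
  for (const name of Object.keys(prev.props)) {
    if (!(name in next.props)) patches.push({ type: "removeProp", path, name });
  }

  // Compare children position by position, then handle additions and removals.
  const shared = Math.min(prev.children.length, next.children.length);
  for (let i = 0; i < shared; i++) {
    patches.push(...diff(prev.children[i], next.children[i], [...path, i]));
  }
  for (let i = shared; i < next.children.length; i++) {
    patches.push({ type: "insert", path: [...path, i], node: next.children[i] });
  }
  for (let i = prev.children.length - 1; i >= shared; i--) {
    patches.push({ type: "remove", path: [...path, i] });
  }
  return patches;
}

// Example: a tile's class and label change; only those two differences are reported.
const before: VNode = { tag: "button", props: { class: "tile" }, children: ["Hello"] };
const after: VNode = { tag: "button", props: { class: "tile active" }, children: ["Goodbye"] };
console.log(diff(before, after));
```

The point of the sketch is the shape of the result: the untouched parts of the tree produce no patches, so only the changed attribute and text would be written to the real DOM.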

React is supported by all popular browsers. (For some older browsers like IE 9 / IE 10, polyfills are required).

ECMAScript Internationalization API

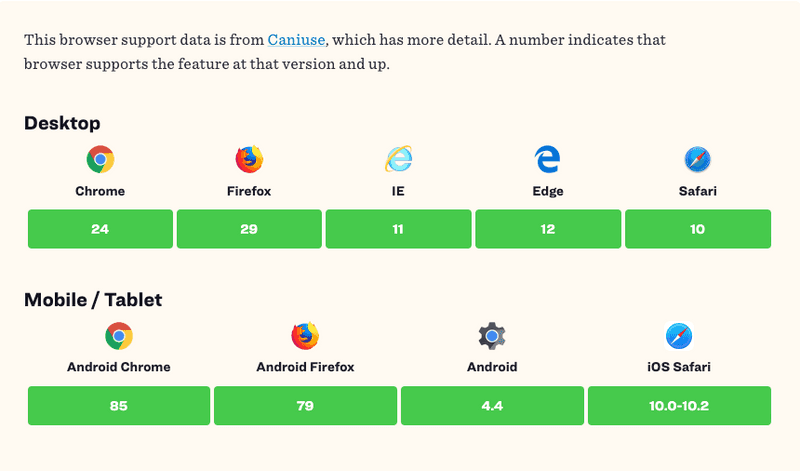

As noted earlier, one area in which current AAC offerings fall short is broad multi-language support. The combination of the Web Speech API, the Internationalization API (and the open source offerings around it), and React allows us to support up to 33 languages. (For reasons described earlier, this support varies between operating systems).

Internationalization is the process of designing and developing an application and its content “in a way that ensures it will work well for, or can be easily adapted for, users from any culture, region, or language.” The Internationalization API provides functionality for three key areas: string comparison, number formatting, and date and time formatting. The API is exposed on the global Intl object.
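The three areas can each be tried directly on the global `Intl` object. The following is a small sketch; the sample values are arbitrary, and the output comments assume a runtime that ships full locale data (as current browsers and recent Node.js releases do).

```typescript
// String comparison: locale-aware sorting with Intl.Collator.
const letters = ["z", "ä", "a"];
const germanOrder = [...letters].sort(new Intl.Collator("de").compare); // ["a", "ä", "z"]
const swedishOrder = [...letters].sort(new Intl.Collator("sv").compare); // ["a", "z", "ä"]

// Number formatting: grouping and decimal separators follow the locale.
const amount = 1234567.891;
const enNumber = new Intl.NumberFormat("en-US").format(amount); // "1,234,567.891"
const deNumber = new Intl.NumberFormat("de-DE").format(amount); // "1.234.567,891"

// Date and time formatting: field order and separators follow the locale.
// The time zone is pinned to UTC so the result does not depend on the machine.
const published = new Date(Date.UTC(2018, 2, 17));
const enDate = new Intl.DateTimeFormat("en-US", { timeZone: "UTC" }).format(published); // "3/17/2018"
const deDate = new Intl.DateTimeFormat("de-DE", { timeZone: "UTC" }).format(published); // "17.3.2018"

console.log(germanOrder, swedishOrder, enNumber, deNumber, enDate, deDate);
```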

FormatJS, a collection of libraries that build on the Intl standard, has a suite of integrations with common component libraries (like React!) that ship with the FormatJS core libraries built in. We use the React integration, react-intl, which provides bindings to internationalize React apps.

Most browsers in the world support the ES Intl API (84.16% of users globally at time of writing).

Progressive Web Apps

Progressive Web Apps (PWAs) are regular websites that take advantage of modern browser features to deliver a web experience with the same benefits (or even better ones) as native mobile apps. Any website is technically a PWA if it fulfills three requirements: it runs under HTTPS, has a Web App Manifest, and has a service worker. A service worker essentially acts as a proxy, sitting between web applications, the browser, and the network. It runs in the background, deciding to serve network or cached content based on connectivity, allowing for management of an offline experience.
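One way a service worker might decide between network and cached content is a "network first, cache as fallback" strategy. The sketch below is an assumption-laden illustration, not Cboard's implementation: `networkFirst` and `CacheLike` are hypothetical names, and the cache and fetch function are passed in as parameters so the logic can run outside a browser.

```typescript
// Minimal cache shape, loosely modeled on the match/put methods of the Cache interface.
interface CacheLike<Req, Res> {
  match(request: Req): Promise<Res | undefined>;
  put(request: Req, response: Res): Promise<void>;
}

// Hypothetical helper: try the network first; when that fails (for example,
// because the device is offline), fall back to a previously cached response.
async function networkFirst<Req, Res>(
  request: Req,
  cache: CacheLike<Req, Res>,
  fetchFn: (request: Req) => Promise<Res>
): Promise<Res> {
  try {
    const fresh = await fetchFn(request);
    await cache.put(request, fresh); // remember it for later offline use
    return fresh;
  } catch (networkError) {
    const cached = await cache.match(request);
    if (cached !== undefined) return cached;
    throw networkError; // nothing cached either: surface the failure
  }
}
```

In an actual service worker, a function like this would be called from a `fetch` event handler through `event.respondWith(...)`, with a cache obtained from `caches.open(...)`. A real `Response` would also need to be cloned with `response.clone()` before being stored, because its body can only be read once.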

Beyond those three base requirements, things get a bit murkier. When Alex Russell and Frances Berriman introduced and named the “progressive web app,” they enumerated nine attributes that characterize a PWA: responsive, connectivity independent, app-like, fresh, safe, discoverable, re-engageable, installable, and linkable.

This concept ends up as the key puzzle piece in our attempt to build something that solves the problems described earlier: most existing AAC solutions can be prohibitively expensive, offer limited languages, or remain stuck in an app store or proprietary device. Using the PWA approach, we can tie together the features modern browsers have to offer (the Web Speech API, the Internationalization API, and so on) with an app-like experience that works regardless of operating system, is not beholden to centralized app distribution methods, and supports seamlessly continued offline use.

Learn more

The current state of the web provides all the foundational ingredients we need to build a more inclusive, more broadly accessible AAC solution for people around the world. In the spirit of the open web, and with a great nod to the work that has been done to codify web standards, we know that a free and open communication solution is in sight.

Sound interesting to you? We invite you to come take a look and perhaps even dig in as a contributor!

References

- http://blog.teamtreehouse.com/getting-started-speech-synthesis-api

- https://w3c.github.io/speech-api/speechapi.html#tts-section

- https://developer.mozilla.org/en-US/docs/Web/API/SpeechSynthesis

- https://www.sitepoint.com/introducing-web-speech-api/

- https://hacks.mozilla.org/2016/01/firefox-and-the-web-speech-api/

- https://hacks.mozilla.org/2014/12/introducing-the-javascript-internationalization-api/

- https://julian.is/article/progressive-web-apps/

- http://alistapart.com/article/yes-that-web-project-should-be-a-pwa

- https://adactio.com/journal/12461

- https://medium.com/@slightlylate/progressive-apps-escaping-tabs-without-losing-our-soul-3b93a8561955